Fine-Tuning vs. RAG: AI Choices for 2026

Introduction: Navigating the AI Landscape in 2026

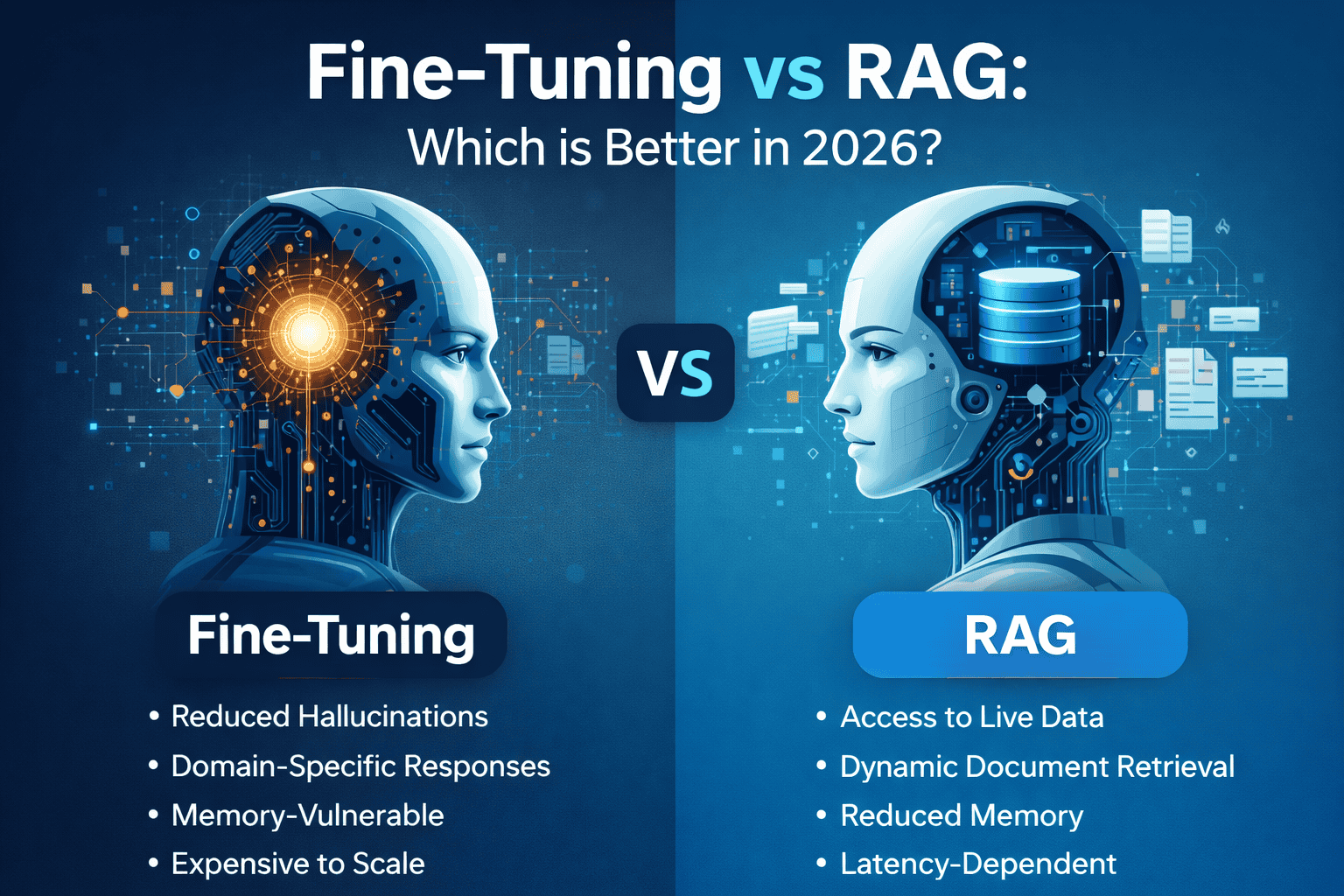

As we advance into 2026, artificial intelligence continues to reshape how products are built across industries. For companies, startups, individual creators, and developers, knowing how the main adaptation techniques differ is essential to getting real value from AI. Two prominent approaches for tailoring AI models to specific tasks are fine-tuning and Retrieval-Augmented Generation (RAG). This post compares the two methods, outlining their strengths, weaknesses, and the situations where each fits best.

Understanding Fine-Tuning

Fine-tuning involves taking a pre-trained AI model and training it further on a task-specific dataset that is much smaller than the original training corpus. The goal is to adapt the model's existing knowledge so that it performs better in a particular niche. This method is most effective when you have enough high-quality examples that closely match your desired outcome.
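To make the idea concrete, here is a minimal sketch in plain Python. It is a toy linear model rather than a real neural network, and the "pre-trained" weights, the dataset, and the names `fine_tune` and `mse` are all assumptions made up for illustration. The point is only the mechanic: training starts from existing parameters instead of from scratch, and continues on a small task-specific dataset.

```python
def predict(weights, bias, x):
    """Linear model: weighted sum of the features plus a bias."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


def mse(weights, bias, data):
    """Mean squared error of the model over (features, target) pairs."""
    return sum((predict(weights, bias, x) - y) ** 2 for x, y in data) / len(data)


def fine_tune(weights, bias, data, lr=0.05, epochs=500):
    """Continue full-batch gradient descent starting from existing parameters."""
    weights = list(weights)  # copy so the pre-trained weights stay untouched
    n = len(data)
    for _ in range(epochs):
        grad_w = [0.0] * len(weights)
        grad_b = 0.0
        for x, y in data:
            err = predict(weights, bias, x) - y
            for i, xi in enumerate(x):
                grad_w[i] += 2 * err * xi / n
            grad_b += 2 * err / n
        weights = [w - lr * g for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b
    return weights, bias


# Hypothetical "pre-trained" parameters (assumed, not from a real model).
pretrained_w, pretrained_b = [2.0, 1.0], 0.0

# Small task-specific dataset that follows y = 2.5*x1 + 0.5*x2 + 1.
task_data = [
    ([1.0, 0.0], 3.5),
    ([0.0, 1.0], 1.5),
    ([1.0, 1.0], 4.0),
    ([2.0, 1.0], 6.5),
    ([0.5, 2.0], 3.25),
]

loss_before = mse(pretrained_w, pretrained_b, task_data)
tuned_w, tuned_b = fine_tune(pretrained_w, pretrained_b, task_data)
loss_after = mse(tuned_w, tuned_b, task_data)
print(f"task loss before: {loss_before:.4f}, after: {loss_after:.4f}")
```

With a real model, the same loop would typically be handled by a training library, but the structure (load existing weights, train briefly on task data, compare before and after) stays the same.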

Benefits of Fine-Tuning

- Improved Performance: Fine-tuning can significantly enhance the performance of a pre-trained model on a specific task compared to using it out-of-the-box.

- Reduced Computational Cost: Leveraging a pre-trained model reduces the need to train from scratch, saving considerable computational resources and time.

- Customization: Fine-tuning lets you tailor the model's behavior, such as its tone, output format, and domain vocabulary, to your specific requirements and datasets.

Limitations of Fine-Tuning

- Data Dependency: Requires a sufficiently large and relevant dataset for effective training. Insufficient data can lead to overfitting and poor generalization.

- Catastrophic Forgetting: The model might "forget" previously learned information during the fine-tuning process, especially if the new dataset is significantly different from the original training data.

- Computational Resources: While less resource-intensive than training from scratch, fine-tuning still requires substantial computational power and time, especially for large models.
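Catastrophic forgetting is easier to see with numbers. The sketch below is a deliberately tiny, assumed setup: a one-parameter model whose "pre-trained" weight fits a general dataset, fine-tuned on a task dataset that follows a different rule. The data and function names are hypothetical and only illustrate the effect.

```python
def mse(w, data):
    """Mean squared error of the one-parameter model y = w * x."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)


def fine_tune(w, data, lr=0.05, epochs=100):
    """Plain gradient descent on the new data only."""
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


general_data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]  # original behavior: y = 2x
task_data = [(1.0, 3.0), (2.0, 6.0)]                 # new task: y = 3x

pretrained_w = 2.0  # assumed pre-trained weight, fits general_data exactly
tuned_w = fine_tune(pretrained_w, task_data)

print("loss on task data:   ", mse(pretrained_w, task_data), "->", mse(tuned_w, task_data))
print("loss on general data:", mse(pretrained_w, general_data), "->", mse(tuned_w, general_data))
```

The task loss falls while the loss on the original data rises, because nothing in the fine-tuning objective rewards preserving the old behavior. Evaluating on a held-out sample of the original data alongside the new task is one way to notice this early.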

Real-World Examples of Fine-Tuning

Consider a company specializing in UI/UX design. It could fine-tune a pre-trained image recognition model on a dataset of user interface screenshots to automatically identify and categorize different UI elements. Another example is a software development firm that fine-tunes a code generation model on its internal codebase so that generated code follows the firm's conventions more consistently. The Hugging Face Hub hosts many pre-trained models that can be fine-tuned for a wide range of applications.

Exploring Retrieval-Augmented Generation (RAG)

RAG combines the power of pre-trained language models with the ability to retrieve relevant information from an external knowledge base. When a query is received, the system first retrieves relevant documents or passages from the knowledge base and then uses this information to generate a more informed and context-aware response. This approach is particularly useful when dealing with tasks that require up-to-date or highly specific information.
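The retrieve-then-generate flow described above can be sketched in a few lines. This is a simplified illustration, not a production design: it uses bag-of-words cosine similarity in place of the learned embeddings and vector database a real system would normally use, and it stops at building the prompt because the generation call depends on whichever model you choose. The knowledge base contents and the names `retrieve` and `build_prompt` are assumptions for the example.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, documents, k=2):
    """Return up to k documents with non-zero similarity, most similar first."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(d))), d) for d in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in scored[:k] if score > 0]


def build_prompt(query, passages):
    """Place the retrieved passages ahead of the question, numbered for citation."""
    context = "\n".join(f"[{i + 1}] {p}" for i, p in enumerate(passages))
    return (
        "Answer using only the context below and cite passage numbers.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


# Hypothetical knowledge base entries.
knowledge_base = [
    "Password resets are available from the account settings page.",
    "Refunds are processed within five business days.",
    "The mobile app supports offline mode on Android and iOS.",
]

query = "How long do refunds take?"
passages = retrieve(query, knowledge_base, k=1)
prompt = build_prompt(query, passages)
print(prompt)  # this prompt would then be sent to the language model
```

Updating what the system knows means editing `knowledge_base`, not retraining anything, which is the property that makes RAG attractive for fast-changing information.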

Benefits of RAG

- Access to Current Information: RAG can draw on the latest contents of a dynamic knowledge base, so responses stay as current as the knowledge base itself without retraining the model.

- Reduced Hallucination: By grounding responses in retrieved information, RAG lowers the risk of the model generating factually incorrect or nonsensical answers (hallucinations), although it does not eliminate that risk.

- Transparency and Explainability: RAG can show which sources were used to generate a response, enhancing transparency and allowing users to verify the information themselves.

Limitations of RAG

- Complexity: Implementing RAG involves managing both a language model and a retrieval system, adding complexity to the overall architecture.

- Retrieval Bottleneck: The performance of RAG is highly dependent on the quality of the retrieval system. Inaccurate or irrelevant retrieval can negatively impact the generated responses.

- Scalability Challenges: Scaling the knowledge base and retrieval system to handle large volumes of data and user queries can be challenging.
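Because answer quality depends so heavily on retrieval, it helps to measure the retriever on its own. One common metric is recall@k: of the documents known to be relevant to a query, what fraction appears in the top k results? The sketch below assumes you have a small hand-labeled set of queries with relevant document IDs; the IDs and the function name are hypothetical.

```python
def recall_at_k(retrieved_ids, relevant_ids, k):
    """Fraction of the relevant documents that appear in the top-k results."""
    relevant = set(relevant_ids)
    if not relevant:
        return 0.0
    top_k = set(retrieved_ids[:k])
    return len(top_k & relevant) / len(relevant)


# Hypothetical example: the retriever's ranked output versus labeled relevant docs.
ranked_results = ["d3", "d1", "d7", "d2"]
labeled_relevant = {"d1", "d2"}

print(recall_at_k(ranked_results, labeled_relevant, k=2))
print(recall_at_k(ranked_results, labeled_relevant, k=4))
```

A low score here points to the retriever rather than the language model as the place to invest effort.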

Real-World Examples of RAG

Imagine a startup developing a customer support chatbot. By using RAG with a knowledge base of product documentation, FAQs, and support tickets, the chatbot can provide accurate and contextually relevant answers to customer inquiries. Another example is a company building a research tool that allows users to query scientific papers. RAG can be used to retrieve relevant excerpts from the papers and generate summaries, saving researchers time and effort. Pinecone and Weaviate are popular vector databases used for implementing RAG systems.

Fine-Tuning vs. RAG: A Detailed Comparison in 2026

Choosing between fine-tuning and RAG depends on the specific requirements of the application. Here's a comparative overview:

- Data Availability: If you have a large, high-quality dataset specific to your task, fine-tuning might be the better option. If you need to incorporate real-time or external knowledge, RAG is more suitable.

- Task Complexity: For tasks that require reasoning over a fixed domain of knowledge, fine-tuning can be effective. For tasks that require accessing and integrating information from a dynamic knowledge base, RAG is preferable.

- Update Frequency: If the information required for the task changes frequently, RAG is better because it can access the latest data. Fine-tuning requires retraining the model whenever the underlying data changes significantly.

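- Computational Resources: Fine-tuning typically requires more computational resources upfront for training. RAG shifts the cost to runtime: it requires resources for maintaining and querying the knowledge base, and each request carries extra retrieval latency and a longer prompt.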

- Transparency and Explainability: RAG provides greater transparency by showing the source of information used to generate a response. Fine-tuning is less transparent, as the model's knowledge is implicitly encoded in its weights.
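The criteria above can be written down as a rough checklist. The function below is only a sketch of that reasoning under simplifying assumptions: the field names and the rule itself are made up for illustration, every factor is reduced to a yes/no answer, and a real decision would also weigh budget, latency, and team expertise.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    has_large_task_dataset: bool    # Data Availability
    knowledge_changes_often: bool   # Update Frequency
    needs_external_knowledge: bool  # Task Complexity
    needs_source_citations: bool    # Transparency and Explainability


def recommend_approach(req):
    """Map the comparison criteria to a coarse starting recommendation."""
    favors_fine_tuning = req.has_large_task_dataset
    favors_rag = (
        req.knowledge_changes_often
        or req.needs_external_knowledge
        or req.needs_source_citations
    )
    if favors_fine_tuning and favors_rag:
        return "hybrid"
    if favors_rag:
        return "rag"
    if favors_fine_tuning:
        return "fine-tuning"
    return "undecided"


support_bot = Requirements(
    has_large_task_dataset=False,
    knowledge_changes_often=True,
    needs_external_knowledge=True,
    needs_source_citations=True,
)
print(recommend_approach(support_bot))
```

Treat the output as a conversation starter rather than a verdict; its main use is forcing each criterion to be answered explicitly.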

Innovation in Design, Development, and More with AI

Both fine-tuning and RAG are powerful tools for driving innovation across various domains:

Design

In UI/UX design, fine-tuning can be used to create AI models that automatically generate design mockups based on user requirements. RAG can be used to provide designers with access to a vast library of design patterns and best practices, helping them make informed decisions.

Development

In software development, fine-tuning can be used to create code generation models that automate repetitive coding tasks. RAG can be used to provide developers with access to a comprehensive knowledge base of APIs, libraries, and frameworks, improving their productivity and code quality.

Startups and Individual Creators

Startups and individual creators can leverage fine-tuning and RAG to develop innovative AI-powered products and services. For example, a startup could use fine-tuning to create a personalized learning platform that adapts to individual student needs. An individual creator could use RAG to build a chatbot that provides expert advice on a specific topic.

The Future of AI: Hybrid Approaches

Looking ahead to 2026 and beyond, a hybrid approach that combines the strengths of both fine-tuning and RAG is likely to become increasingly popular. For instance, a model could be fine-tuned on a domain's terminology and response style and then paired with RAG to pull in current information relevant to each query. This would allow the model to draw on both the knowledge encoded in its weights and the latest information available.
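Structurally, a hybrid system is a retrieval step feeding a fine-tuned model. The sketch below shows only that composition. Everything in it is a stand-in: `fine_tuned_model_stub` is a placeholder for a call to an actual fine-tuned model, the retriever simply counts shared words, and the documents are invented.

```python
def keyword_retriever(query, documents, k=1):
    """Toy retriever: rank documents by how many query words they share."""
    query_words = set(query.lower().split())
    ranked = sorted(
        documents,
        key=lambda d: len(query_words & set(d.lower().split())),
        reverse=True,
    )
    return ranked[:k]


def fine_tuned_model_stub(prompt):
    """Placeholder for a fine-tuned model; it only echoes the supplied context."""
    context_line = prompt.split("Context: ", 1)[1].split("\n", 1)[0]
    return f"Per our records: {context_line}"


def hybrid_answer(query, documents, generate=fine_tuned_model_stub):
    """Retrieve current facts first, then let the (fine-tuned) model phrase the answer."""
    passages = keyword_retriever(query, documents)
    prompt = f"Context: {' '.join(passages)}\nQuestion: {query}"
    return generate(prompt)


# Hypothetical knowledge base that can change without retraining the model.
docs = [
    "Version 4.2 was released on Monday with a new export feature.",
    "Support is available by email on weekdays.",
]
answer = hybrid_answer("When was version 4.2 released?", docs)
print(answer)
```

The division of labor is the design choice worth noting: fine-tuning shapes how the model responds, while retrieval controls which facts it responds with.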

Conclusion: Making the Right Choice for Your AI Needs

In 2026, the choice between fine-tuning and RAG will depend on your specific application's needs. Fine-tuning excels when you have ample, relevant data and require task-specific performance improvements. RAG shines when you need access to current information and prioritize transparency. By understanding the strengths and limitations of each approach, companies, startups, and individual creators can match the technique to their data, resources, and desired outcomes. As the field continues to evolve, experimenting with hybrid approaches will likely unlock even greater potential.